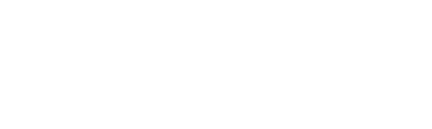

Dataset design combining MEG recordings and eye tracking with natural scene understanding. Adapted from Sulewski et al., 2025.

The Active Visual Semantics Dataset

The Active Visual Semantics (AVS) dataset combines magnetoencephalography (MEG), eye tracking, and semantic scene captioning to study active visual processing during natural scene exploration. Built on scenes from the Natural Scenes Dataset (NSD; Allen et al., 2022), AVS captures fixation-locked neural dynamics across 4,080 natural images at the high temporal resolution of MEG.

Most visual neuroscience paradigms require participants to hold central fixation. AVS instead records neural activity while participants move their eyes freely across natural scenes, so that responses can be studied during self-directed visual exploration.

AVS at a Glance

- 4,080 natural scenes from NSD (Allen et al., 2022)

- Free viewing + German sentence-level captioning

- Eye movement-locked event-related fields (ERFs) in MEG

- Individual head stabilisation & MRI-guided source modelling

- 5 participants × 10+ hours of recordings

- 235,000+ gaze events

- 4 s scene presentation with eye tracking & MEG

- 306-channel MEG system

- 5,100 German scene descriptions (transcribed)

- Raw + preprocessed data with MNE-Python pipelines
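Several of the headline figures above can be cross-checked with simple arithmetic. The sketch below assumes that every participant viewed all 4,080 scenes and that a fixed 25% of them were followed by captioning (the captioning rate given in the design list further down); both are assumptions made for illustration, and the variable names are hypothetical.

```python
# Headline numbers taken from the dataset description.
n_scenes = 4080          # natural scenes from NSD
n_participants = 5
exposure_s = 4           # seconds of presentation per scene
caption_fraction = 0.25  # share of scenes followed by captioning

# Assumption: each participant viewed every scene once.
captions_per_participant = int(n_scenes * caption_fraction)
total_captions = captions_per_participant * n_participants

# Pure scene-viewing time per participant, excluding captioning and breaks.
viewing_hours = n_scenes * exposure_s / 3600

print(captions_per_participant)  # 1020
print(total_captions)            # 5100
print(round(viewing_hours, 2))   # 4.53
```

Under these assumptions the caption count matches the 5,100 transcribed descriptions listed above, and scene viewing alone accounts for roughly 4.5 hours per participant.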

Early access available

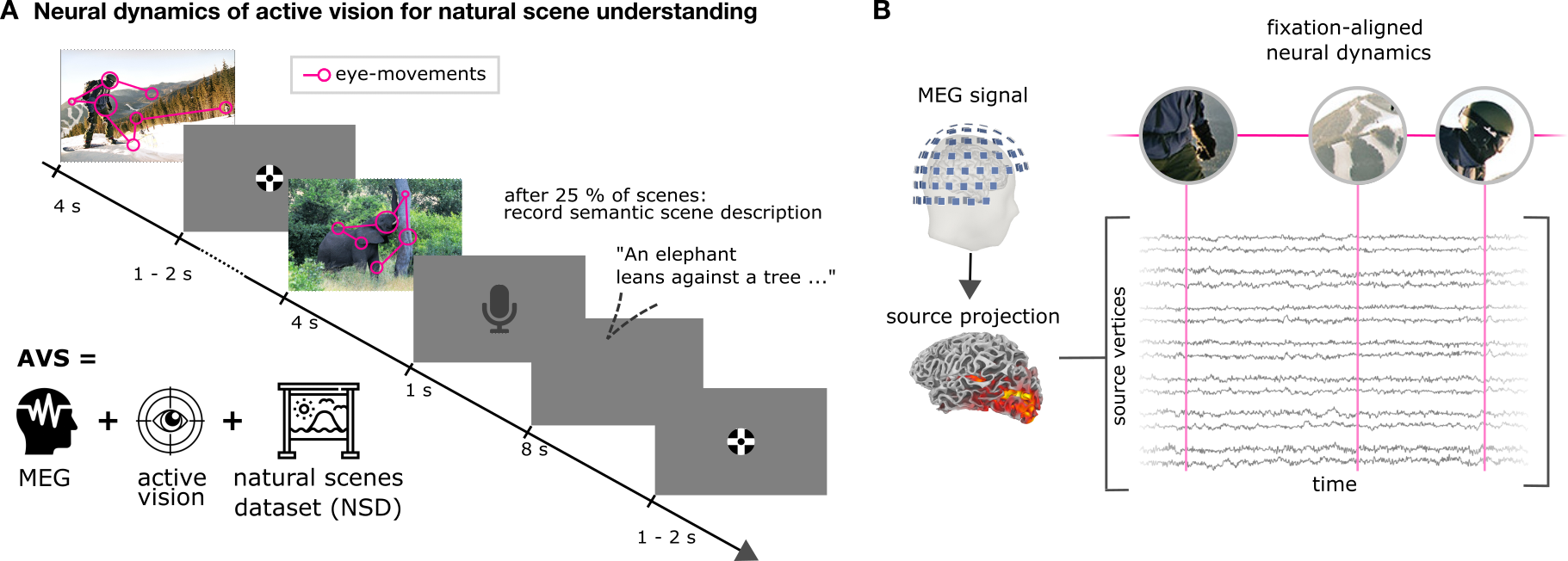

Balanced semantic scene sampling approach. Adapted from Sulewski et al., 2025.
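The general idea of balanced semantic sampling can be sketched in a few lines: embed each scene caption, group the embeddings into clusters, then draw scenes evenly from every cluster. The sketch below is only an illustration under stated assumptions. Random vectors stand in for sBERT caption embeddings, a plain NumPy k-means stands in for whatever clustering method was actually used, and the function names and sample sizes are hypothetical.

```python
import numpy as np

def kmeans(x, k, n_iter=20, seed=0):
    """Plain Lloyd's k-means; returns one cluster label per row of x."""
    rng = np.random.default_rng(seed)
    centers = x[rng.choice(len(x), size=k, replace=False)]
    for _ in range(n_iter):
        # Squared Euclidean distance from every point to every centre.
        dists = ((x[:, None, :] - centers[None, :, :]) ** 2).sum(axis=-1)
        labels = dists.argmin(axis=1)
        for j in range(k):
            members = x[labels == j]
            if len(members) > 0:  # keep the old centre if a cluster empties
                centers[j] = members.mean(axis=0)
    return labels

def balanced_sample(labels, n_total, seed=0):
    """Draw up to n_total items, spread evenly over the clusters present."""
    rng = np.random.default_rng(seed)
    clusters = np.unique(labels)
    per_cluster = n_total // len(clusters)
    chosen = []
    for c in clusters:
        idx = np.flatnonzero(labels == c)
        take = min(per_cluster, len(idx))  # small clusters give what they have
        chosen.extend(rng.choice(idx, size=take, replace=False))
    return np.array(sorted(chosen), dtype=int)

# Stand-in data: 2,000 hypothetical caption embeddings of dimension 8.
rng = np.random.default_rng(42)
embeddings = rng.normal(size=(2000, 8))

labels = kmeans(embeddings, k=60)                 # 60 semantic clusters
selected = balanced_sample(labels, n_total=600)   # hypothetical target size
counts = np.bincount(labels[selected], minlength=60)
print(len(selected), counts.min(), counts.max())
```

Capping the draw per cluster is what keeps frequent scene types from dominating the stimulus set. Clusters with fewer members than the per-cluster quota simply contribute everything they have.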

Experimental Design & Technical Specifications

- 4,080 scenes from NSD

- 4 s exposure per scene

- ~9 fixations recorded per scene

- 25% of scenes followed by captioning

- German verbal descriptions

- sBERT semantic embeddings (Reimers & Gurevych, 2019)

- 60 semantic clusters from MS-COCO captions (Lin et al., 2014)

- Balanced sampling over clusters

- Includes NSD shared1000

- Fixation-locked MEG event-related fields

- Source-projected neural activity (dSPM)

- HCP (Glasser et al., 2016) & NSD region parcellations

- Eye movement parameters & sequences

- Object labels for fixated locations

- Rich trial metadata
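Fixation-locked event-related fields, as listed above, are obtained by cutting the continuous MEG signal into short windows around each fixation onset and averaging them. The following is a minimal NumPy sketch of that idea on synthetic data, not the dataset's own MNE-Python pipeline (where the equivalent step would typically go through `mne.Epochs`). The sampling rate, epoch window, baseline choice, and function name are assumptions made for illustration.

```python
import numpy as np

def fixation_locked_average(data, sfreq, onsets_s, tmin=-0.2, tmax=0.5):
    """Cut epochs around fixation onsets and average them.

    data     : (n_channels, n_samples) continuous recording
    sfreq    : sampling rate in Hz
    onsets_s : fixation onset times in seconds
    """
    if tmin >= 0:
        raise ValueError("tmin must be negative to allow a pre-onset baseline")
    start = int(round(tmin * sfreq))
    stop = int(round(tmax * sfreq))
    epochs = []
    for onset in onsets_s:
        centre = int(round(onset * sfreq))
        lo, hi = centre + start, centre + stop
        if lo < 0 or hi > data.shape[1]:
            continue  # skip events too close to the recording edges
        epoch = data[:, lo:hi]
        # Subtract the mean of the pre-onset interval from each channel.
        baseline = epoch[:, :-start].mean(axis=1, keepdims=True)
        epochs.append(epoch - baseline)
    epochs = np.stack(epochs)
    times = np.arange(start, stop) / sfreq
    return times, epochs.mean(axis=0), len(epochs)

# Synthetic stand-in: 306 channels of noise, 20 s at an assumed 1000 Hz.
sfreq = 1000.0
rng = np.random.default_rng(0)
data = rng.normal(size=(306, 20_000))

# Hypothetical fixation onsets; inject a response 100 ms after each one.
onsets = np.arange(1.0, 19.0, 0.8)
for t in onsets:
    data[0, int(round((t + 0.1) * sfreq))] += 50.0

times, evoked, n_used = fixation_locked_average(data, sfreq, onsets)
peak_time = times[np.argmax(evoked[0])]
print(evoked.shape, n_used, peak_time)
```

In free viewing the pre-onset interval contains the preceding saccade, so the baseline window used here is a simplification. The same function applies unchanged to saccade onsets by passing those times instead.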

Studies Using the AVS Dataset

Why we linger: Memory encoding, rather than visual processing demand, drives fixation timing on natural scenes – evidence from a large-scale MEG dataset

Sulewski, P., Amme, C., Hebart, M. N., König, P., & Kietzmann, T. C.

Research Square, 2025 | doi:10.21203/rs.3.rs-7029247/v1

Demonstrates that longer fixations are driven by memory encoding rather than visual complexity, using multivariate MEG analysis and deep neural network modelling.

Saccade onset, not fixation onset, best explains early responses across the human visual cortex during naturalistic vision

Amme, C., Sulewski, P., Spaak, E., Hebart, M. N., König, P., & Kietzmann, T. C.

bioRxiv, 2024 | doi:10.1101/2024.10.25.620167

Shows that saccade onset, not fixation onset, better explains M100 responses in visual cortex during naturalistic vision.

Conference Contributions

Neural oscillations encode context-based informativeness during naturalistic free viewing

Bai, S., Sulewski, P., Amme, C., König, P., Kietzmann, T. C., Peelen, M. V., & Spaak, E.

Conference on Cognitive Computational Neuroscience (CCN), Amsterdam, 2025

Encoding of Fixation-Specific Visual Information: No Evidence of Information Carry-Over between Fixations

Amme, C., Sulewski, P., Braatz, M., König, P., & Kietzmann, T. C.

Conference on Cognitive Computational Neuroscience (CCN), Amsterdam, 2025

Gazing into memory: Active vision is timed to stabilise cortical representations for fixation-based memory encoding

Sulewski, P., Amme, C., Hebart, M. N., König, P., & Kietzmann, T. C.

European Conference on Visual Perception (ECVP), Aberdeen, 2024

The Active Visual Semantics Dataset – Understanding visual intelligence in action

Sulewski, P., Amme, C., Hebart, M. N., König, P., & Kietzmann, T. C.

NEAT 23, Osnabrück, Germany, 2023

References

- Allen, E. J., et al. (2022). A massive 7T fMRI dataset to bridge cognitive neuroscience and artificial intelligence. Nature Neuroscience, 25(1), 116–126.

- Lin, T.-Y., et al. (2014). Microsoft COCO: Common objects in context. Computer Vision–ECCV 2014, 740–755.

- Reimers, N., & Gurevych, I. (2019). Sentence-BERT: Sentence embeddings using Siamese BERT-Networks. arXiv:1908.10084.

- Glasser, M. F., et al. (2016). A multi-modal parcellation of human cerebral cortex. Nature, 536, 171–178.